ChatGPT is all the rage. It even drives some people into a rage. It does some remarkable things, it does some outrageous things, it does some absurd things. Most things it does badly, such as tell you how to build rockets. One thing it does—and I’m not sure which category to put this in—is define religion. It does so implicitly through its responses to various kinds of queries. In doing so, however, it reveals bias in the training sets and bias in the constraints put on it by developers.


Via jokes, ChatGPT chooses which religious traditions and figures deserve respect — and therefore what counts as ‘religion’

BNG staff | February 7, 2023

More Articles

-

Wissam al-Saliby named president of 21Wilberforce Global Freedom Center

NewsBNG staff

-

Kincaid will lead Baylor’s Institute for Faith and Learning

NewsBNG staff

-

Disinformation campaign uses ‘phony science’ to discredit trans people, researcher says

NewsJeff Brumley

-

The 1948 Deir Yassin massacre as a prequel to today’s war in Gaza

OpinionRaouf J. Halaby

-

Why did Haynes resign as head of Rainbow PUSH? ‘He did not have the full authority to actually do the job’

NewsMark Wingfield

-

Conference organizer calls Mark Driscoll to repent over lies about men’s event

NewsMark Wingfield

-

Demographic decline is upon us — what’s next?

AnalysisErich Bridges

-

The church could increase resilience in survivors

OpinionGeneece Goertzen-Morrison

-

Christians could bring down the heat on the gun debate, author says

NewsJeff Brumley

-

Supreme Court hears case that could empower cities to fine people for being homeless

NewsJeff Brumley

-

Ministry jobs and more

NewsBarbara Francis

-

What is going on at America’s elite universities?

OpinionMark Wingfield

-

The post-evangelicals take their next step forward

OpinionDavid Gushee, Senior Columnist

-

Florida joins Texas in adopting school ‘chaplain’ option denounced by chaplains

NewsJeff Brumley

-

A newspaper, a religion and a dance troupe producing misinformation

AnalysisRodney Kennedy

-

Toxic crusaders: The rise of the ultracrepidarian-turned-influencer

OpinionJ. Basil Dannebohm

-

Surrounded by trees: An Earth Day celebration and lament

OpinionKatherine Smith

-

How evangelicals promoted, then abandoned environmental stewardship

NewsSteve Rabey

-

As antisemitism and Islamophobia skyrocket, the effects ripple through politics

AnalysisTyler Hummel

-

Seminary asks court to dismiss Greenway’s defamation suit

NewsMark Wingfield

-

Bubba-Doo’s takes on the Caribbean — Part 1

OpinionCharles Qualls

-

Why we wrote a book on the life-giving stories of palliative care

OpinionJack Levison

-

Those who fear immigrant labor ‘are wrong and have always been wrong,’ Durbin says

NewsJeff Brumley

-

The war on pornography returns to the supply side

AnalysisMark Wingfield

-

Finding the gospel amid a ‘churchgoing bust’

OpinionBill Leonard, Senior Columnist

-

Wissam al-Saliby named president of 21Wilberforce Global Freedom Center

NewsBNG staff

-

Kincaid will lead Baylor’s Institute for Faith and Learning

NewsBNG staff

-

Disinformation campaign uses ‘phony science’ to discredit trans people, researcher says

NewsJeff Brumley

-

Why did Haynes resign as head of Rainbow PUSH? ‘He did not have the full authority to actually do the job’

NewsMark Wingfield

-

Conference organizer calls Mark Driscoll to repent over lies about men’s event

NewsMark Wingfield

-

Christians could bring down the heat on the gun debate, author says

NewsJeff Brumley

-

Supreme Court hears case that could empower cities to fine people for being homeless

NewsJeff Brumley

-

Ministry jobs and more

NewsBarbara Francis

-

Florida joins Texas in adopting school ‘chaplain’ option denounced by chaplains

NewsJeff Brumley

-

How evangelicals promoted, then abandoned environmental stewardship

NewsSteve Rabey

-

Seminary asks court to dismiss Greenway’s defamation suit

NewsMark Wingfield

-

Those who fear immigrant labor ‘are wrong and have always been wrong,’ Durbin says

NewsJeff Brumley

-

As delegates prepare for UMC General Conference, advocates outline their appeals

NewsCynthia Astle

-

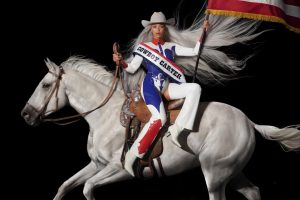

‘Cowboy Carter’ is a lot to process, but so is being Black in America

NewsCynthia Vacca Davis

-

Stained glass Black Jesus moves to Memphis

NewsKristen Thomason

-

Derek Webb finds new life in uncertainty

NewsMaina Mwaura

-

Ryan Burge explains ‘casual dechurching’

NewsJeff Brumley

-

The Freedom Caucus, source of conflict in Congress, has evangelical support

NewsSteve Rabey

-

Ministry jobs and more

NewsBarbara Francis

-

Experts detail how famine and disease are taking a second toll on Gaza

NewsJeff Brumley

-

Americans are not equally divided on culture wars, Robert Jones explains in BNG webinar

NewsJeff Brumley

-

Another court blocks DeSantis’ ‘anti-woke’ agenda as unconstitutional

NewsJeff Brumley

-

Former Southern Baptist pastor, an election denier, leads FRC Action’s ‘election integrity’ effort

NewsSteve Rabey

-

Rwanda: 30 years after the genocide

NewsAnthony Akaeze

-

McCammon and Emerson headline Friends of BNG dinner

NewsBNG staff

-

The 1948 Deir Yassin massacre as a prequel to today’s war in Gaza

OpinionRaouf J. Halaby

-

The church could increase resilience in survivors

OpinionGeneece Goertzen-Morrison

-

What is going on at America’s elite universities?

OpinionMark Wingfield

-

The post-evangelicals take their next step forward

OpinionDavid Gushee, Senior Columnist

-

Toxic crusaders: The rise of the ultracrepidarian-turned-influencer

OpinionJ. Basil Dannebohm

-

Surrounded by trees: An Earth Day celebration and lament

OpinionKatherine Smith

-

Bubba-Doo’s takes on the Caribbean — Part 1

OpinionCharles Qualls

-

Why we wrote a book on the life-giving stories of palliative care

OpinionJack Levison

-

Finding the gospel amid a ‘churchgoing bust’

OpinionBill Leonard, Senior Columnist

-

No one ever talks about how hard it is to come back from sabbatical

OpinionCourtney Stamey

-

An asset map shows how churches are meeting the needs of older adults in rural areas

OpinionJessica Lewis

-

The dangers of minority rule

OpinionMark Wingfield

-

Politics, faith and mission: A conversation with Randolph Hollerith

OpinionGreg Garrett, Senior Columnist

-

The Sunday I was transfigured

OpinionVictoria Robb Powers

-

‘You forgive us, we’ll forgive you,’ the theology of John Prine

OpinionJohn Burns

-

The antidote to mean Christianity

OpinionMartin Thielen

-

A musical playlist for commemorating Martin Luther King

OpinionKen Sehested

-

Why Josh Howerton makes me ashamed to be a complementarian

OpinionShannon Makujina

-

Dear Pastor Search Committee

OpinionAnonymous

-

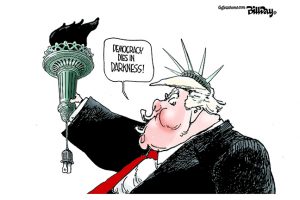

Democracy dies in darkness?

OpinionRichard Conville

-

The truth about lying

OpinionBrett Younger

-

A new book on the Bible and LGBTQ folks by a straight, white man is not what we need

OpinionSusan M. Shaw, Senior Columnist

-

The healing ‘heresy’ of Richard Hays

OpinionBrandan Robertson

-

Grace Valentine, ice cream and sources of empowerment for women in complementarian circles

OpinionMallory Challis

-

Politics, faith and mission: A conversation with Jillian Mason Shannon

OpinionGreg Garrett, Senior Columnist

-

A Great Evangelical Divorce? — Speaker Mike Johnson’s breakup with Marjorie Taylor Greene Could Be Bad News for Trump

Curated

Exclude from home pageBNG staff

-

Meet the sex educators challenging what we think we know about sex and Islam

Curated

Exclude from home pageBNG staff

-

Spain approves plan to compensate victims of Catholic Church sex abuse. Church will be asked to pay

Curated

Exclude from home pageBNG staff

-

Biden To Florida Voters: Six-Week Abortion Ban Is Trump’s Fault

Curated

Exclude from home pageBNG staff

-

Evangelical Leaders Lobbied House Speaker For Israel And Ukraine Aid

Curated

Exclude from home pageBNG staff

-

Early Christian Scripture and ancient codices draw collectors’ eyes to Paris

Curated

Exclude from home pageBNG staff

-

Iowa lawmakers address immigration, religious freedom and taxes in 2024 session

Curated

Exclude from home pageBNG staff

-

What cities can learn from Seattle’s racial and social justice law

Curated

Exclude from home pageBNG staff

-

For the Warming of the Earth: Worshiping in the Age of Creation Care

Curated

Exclude from home pageBNG staff

-

‘Present And Future Of The Russian World’: Inside The Document That Has Rocked Orthodoxy

Curated

Exclude from home pageBNG staff

-

Europe’s Jews expand security program as they grapple with antisemitic fallout of Gaza war

Curated

Exclude from home pageBNG staff

-

Review: A Faith of Many Rooms

Curated

Exclude from home pageBNG staff

-

3 things to learn about patience − and impatience − from al-Ghazali, a medieval Islamic scholar

Curated

Exclude from home pageBNG staff

-

100+ arrested in day of unrest and mass protest at Columbia U over Gaza and Israel

Curated

Exclude from home pageBNG staff

-

Animal cruelty officer-turned-animal chaplain Matty Giuliano loves ferrets and St. Francis

Curated

Exclude from home pageBNG staff

-

Who Believes in the Prosperity Gospel?

Curated

Exclude from home pageBNG staff

-

‘The Hopeful,’ film about Adventist origins, debuts in theaters

Curated

Exclude from home pageBNG staff

-

Finding an Uncontainable God Within Finite Poetic Spaces

Curated

Exclude from home pageBNG staff

-

To one early 20th century anti-Zionist rabbi, Passover isn’t simply a celebration of freedom — it’s also a warning

Curated

Exclude from home pageBNG staff

-

Going to the Neighborhood: Post-COVID Community-Based Youth Ministry

Curated

Exclude from home pageBNG staff

-

Haitians Are Ministering at the End of the World

Curated

Exclude from home pageBNG staff

-

In time for Passover, the first Ukrainian-language Haggadah goes to print

Curated

Exclude from home pageBNG staff

-

Indian protesters pull from poetic tradition to resist Modi’s Hindu nationalism

Curated

Exclude from home pageBNG staff

-

How A Small Evangelical Seminary Is Defying The Odds

Curated

Exclude from home pageBNG staff

-

A memoir explores a shattering childhood and narrow escape

Curated

Exclude from home pageBNG staff